Manas Joshi had loved computer science from an early age. So when he secured a marine engineering seat at an IIT, he chose to give it up and join the computer science and engineering course at the National Institute of Technology (NIT), Warangal.

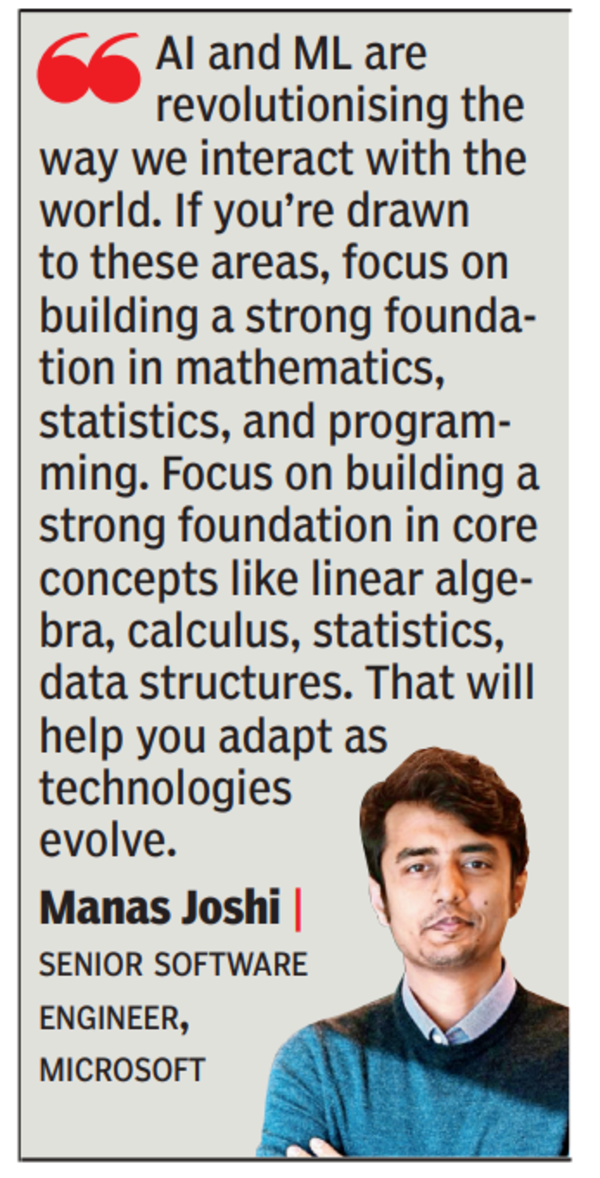

That choice paid off. Today he is a senior software engineer at Microsoft in Redmond, US, with expertise in AI, ML and software engineering. He has been awarded a fellowship of the British Computer Society, and he is a member of the Asia-Pacific Artificial Intelligence Association.

“The problem-solving techniques, logical thinking, and analytical skills developed during my computer science course have been invaluable in my role at Microsoft,” he says.

He joined Microsoft’s search and AI org in Hyderabad straight out of college. He worked on Bing Maps and Bing Shopping for four years, building expertise in AI, ML, and backend systems. When a position opened up in Microsoft US for someone with precisely such expertise, Manas applied. That led him to Microsoft’s headquarters in Redmond in 2019.

Manas has primarily worked on the Bing Maps and Search experience. He is responsible for the backend service that powers Bing Maps, which serves millions of queries per second. He helped develop a new algorithm for label density detection in Maps that won the award for best research paper in ML at Microsoft's internal data science and ML conference. "Labels on maps come in a variety of different shapes and sizes, and are often very difficult to detect. I created a new algorithm which improved this label detection by more than 30%," he says.

He also led the development of an ML classifier that identifies product queries in real time in Bing search. Whenever someone enters a query on the search engine, the query needs to be classified by its intent: is the user looking to buy a product, or looking for news about it? Manas' work enabled the system to show the appropriate experience for each.

AI IN ACCESSIBILITY

Today, Manas is using his AI expertise to focus on accessibility issues. “Voice-activated technologies are convenient tools for the average user, but they’re game-changers for people with mobility issues. The ability to control one’s environment, lights, temperature, even sending a text through voice commands can offer a level of independence that wasn’t possible before,” he says. Natural language processing (NLP), he notes, is making strides in aiding those with learning disabilities like dyslexia. Advanced NLP algorithms can adapt text to be more readable for individuals, breaking down complex sentences and even offering synonyms for difficult words.

Computer vision technology, he says, is helping visually impaired individuals navigate public spaces safely.

Manas is also working on a personal project, prompted by his experience that many people cannot afford the standard Humphrey visual field test used to diagnose glaucoma, a condition that can lead to permanent blindness. "I'm trying to replicate the Humphrey test in a more accessible way by using VR and avoiding expensive medical equipment," he says.
