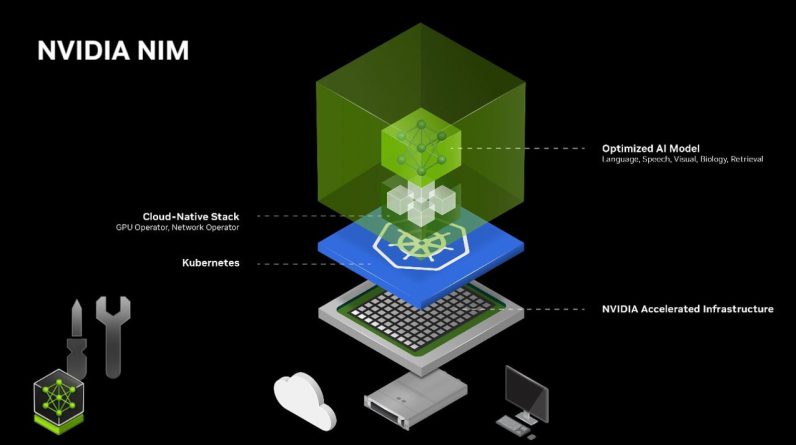

If you are searching for ways to deploy artificial intelligence (AI) models efficiently and effectively, NVIDIA NIM (NVIDIA Inference Microservices) is worth a look. It aims to let developers, businesses, and enthusiasts put accelerated AI to work with minimal setup. With its suite of inference microservices designed specifically for NVIDIA GPUs, NIM streamlines the deployment process, allowing you to integrate AI capabilities into your projects in a matter of minutes. All About AI has put together a great tutorial showing you, step by step, how to deploy accelerated artificial intelligence projects quickly.

What is NVIDIA NIM?

NVIDIA NIM (NVIDIA Inference Microservices) is a set of containerized microservices for running AI models on NVIDIA GPUs. Each NIM packages a model together with an optimized inference engine and exposes it through standard APIs, so the same container can run in a data center, in the cloud, or on a GPU-equipped workstation.

Key Features and Functions of NVIDIA NIM:

- Optimized Inference Engines: NIM containers ship with engines tuned for NVIDIA GPUs, such as TensorRT-LLM for large language models, to deliver high throughput when serving models.

- Low Latency: The microservices are optimized for fast responses, which is essential for interactive applications where timely results are critical.

- Flexibility: NIM supports a range of models and can be deployed on different infrastructure, from a single workstation to a Kubernetes cluster.

- Integration with NVIDIA Ecosystem: NIM builds on NVIDIA's GPU software stack, including CUDA and TensorRT, providing a cohesive and efficient environment for serving models.

Applications:

- Data Centers: Serving AI models at scale on GPU servers.

- Generative AI: Powering chatbots, content generation, and other applications built on large language models.

- Enterprise Applications: Adding AI features to existing software through standard APIs, without building a custom serving stack.

Simplifying AI Deployment

NVIDIA NIM takes the complexity out of AI model deployment, providing a seamless and user-friendly experience. The inference microservices are carefully crafted to optimize performance on NVIDIA GPUs, ensuring maximum efficiency and reliability. Whether you’re an engineer looking to incorporate AI into your applications, an enterprise seeking scalable and secure solutions, or a hobbyist eager to experiment with the latest AI technologies, NIM caters to your needs.

One of the key advantages of NVIDIA NIM is its standardized APIs. These APIs act as a bridge between your existing systems and the powerful AI models, facilitating smooth integration and minimizing compatibility issues. With NIM, you can focus on leveraging the capabilities of AI rather than grappling with complex deployment procedures.
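For LLM microservices, NIM's standardized API follows the OpenAI chat-completions format, so a client needs nothing beyond the Python standard library. The sketch below assumes a NIM container serving on `http://localhost:8000/v1`. That base URL, the hypothetical `NIM_API_KEY` environment variable and the model ID are assumptions, and the model ID must match one your endpoint actually serves. Building the request separately from sending it keeps the request shape easy to inspect.

```python
import json
import os
import urllib.request

# Assumed base URL for a NIM container running locally on port 8000.
# A hosted endpoint would use a different base URL and require an API key.
DEFAULT_BASE_URL = "http://localhost:8000/v1"


def build_chat_request(model, prompt, base_url=DEFAULT_BASE_URL, api_key=None):
    """Build (but do not send) an OpenAI-style POST /chat/completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 128,
    }
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Hosted endpoints expect a bearer token; a local container may not.
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def extract_reply(response_body):
    """Pull the assistant text out of an OpenAI-style chat completion response."""
    data = json.loads(response_body)
    return data["choices"][0]["message"]["content"]


def chat(model, prompt, base_url=DEFAULT_BASE_URL):
    """Send one request and return the reply (requires a running endpoint)."""
    # NIM_API_KEY is a hypothetical variable name used only for this sketch.
    req = build_chat_request(model, prompt, base_url, os.environ.get("NIM_API_KEY"))
    with urllib.request.urlopen(req, timeout=60) as resp:
        return extract_reply(resp.read().decode("utf-8"))
```

Once a container is running, a call might look like `print(chat("meta/llama3-8b-instruct", "Summarize what NIM does in one sentence."))`, where the model ID is only an example.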

Unleashing the Benefits

Deploying AI models with NVIDIA NIM unlocks a host of benefits that can transform your projects:

- Speed and Efficiency: NIM’s streamlined deployment process minimizes downtime, allowing you to get your AI models up and running in record time. By leveraging the power of NVIDIA GPUs, you can experience accelerated inference performance, allowing real-time processing and faster response times.

- Security and Control: NIM offers secure deployment options, ensuring your sensitive data remains protected. With granular control over access and permissions, you can maintain the integrity and confidentiality of your AI models and the data they process.

- Flexibility: NIM adapts to your deployment preferences, whether you choose to deploy on dedicated servers, in the cloud, or on local machines. This flexibility allows you to optimize your infrastructure based on your specific requirements, scalability needs, and budget constraints.
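Speed claims are easiest to judge on your own workload. This minimal sketch times any zero-argument callable (for example, a hypothetical wrapper that sends one request to your endpoint) and reports nearest-rank p50 and p95 latency in milliseconds. It measures end-to-end time as the client sees it, so network overhead is included along with GPU inference.

```python
import math
import time


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def measure_latency(call, runs=20):
    """Time `call()` repeatedly and report p50/p95 latency in milliseconds."""
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        timings.append((time.perf_counter() - start) * 1000.0)
    return {"p50_ms": percentile(timings, 50), "p95_ms": percentile(timings, 95)}
```

Reporting p95 alongside the median matters for interactive use, because occasional slow responses are what users notice.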

Extensive Model and Adapter Support

NVIDIA NIM supports a broad range of models and adapters. The platform includes pre-built engines for widely used models such as Llama 3 and Mistral 7B. Additionally, NIM supports LoRA (Low-Rank Adaptation) adapters, so you can serve versions of a base model that have been fine-tuned for your specific use cases.
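LoRA keeps a pretrained weight matrix W (d × k) frozen and learns two small matrices, B (d × r) and A (r × k), with rank r much smaller than d and k; the adapted weight is W + (α/r)·BA. The plain-Python sketch below illustrates the general technique only. It is not how NIM loads adapters internally, and the matrix sizes are illustrative.

```python
def lora_param_counts(d, k, r):
    """Trainable parameters: full update of a d x k weight vs. a rank-r LoRA update."""
    full = d * k
    lora = r * (d + k)  # B is d x r, A is r x k
    return full, lora


def matmul(x, y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*y)] for row in x]


def merge_lora(w, b, a, alpha, r):
    """Return W + (alpha / r) * B @ A, the effective weight with the adapter applied."""
    delta = matmul(b, a)
    scale = alpha / r
    return [
        [w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
        for w_row, d_row in zip(w, delta)
    ]
```

For a 4096 × 4096 projection with r = 8, `lora_param_counts(4096, 4096, 8)` returns `(16777216, 65536)`: the adapter trains 256 times fewer parameters than a full update of that matrix, which is what makes it practical to keep several adapters alongside one base model.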

The API catalog in NVIDIA NIM serves as a centralized hub for accessing and managing different AI models. With just a few clicks, you can effortlessly swap and test models, allowing you to find the optimal fit for your project. This flexibility ensures that you can stay at the forefront of AI advancements and adapt quickly to evolving requirements.
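Because every model behind an OpenAI-compatible endpoint is addressed the same way, swapping models mostly comes down to changing the model ID string. A sketch, assuming the endpoint exposes the OpenAI-style `GET /v1/models` listing; the base URL and any model IDs you pass in a preference list are hypothetical.

```python
import json
import urllib.request


def parse_model_ids(body):
    """Return the model IDs from an OpenAI-style GET /v1/models response."""
    return [entry["id"] for entry in json.loads(body).get("data", [])]


def list_models(base_url="http://localhost:8000/v1"):
    """Ask a running endpoint which models it serves (assumes /models is exposed)."""
    with urllib.request.urlopen(f"{base_url}/models", timeout=10) as resp:
        return parse_model_ids(resp.read().decode("utf-8"))


def pick_model(available, preferred):
    """Return the first preferred model that is actually available, else None."""
    for name in preferred:
        if name in available:
            return name
    return None
```

Keeping the preference list in configuration rather than code lets you test a different model without touching the rest of the application.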

Seamless Deployment in 5 Minutes

Deploying AI models with NVIDIA NIM is a breeze, even for those new to the world of AI. The process can be broken down into a few simple steps:

- Set Up Environment: Ensure that you have an NVIDIA GPU, a Linux operating system, CUDA drivers, Docker, and the NVIDIA Container Toolkit installed. These prerequisites lay the foundation for accelerated AI deployment.

- Use Docker: NIM uses the power of Docker containers to streamline the deployment process. By encapsulating AI models within containers, you can ensure consistent performance across different environments and easily manage dependencies.

- Integrate with APIs: NIM follows the familiar OpenAI API structure, making it intuitive for developers to integrate AI capabilities into their existing scripts and applications. With just a few lines of code, you can unleash the power of accelerated AI in your projects.
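The setup steps above can be sketched in Python: check that the NVIDIA driver tools and Docker are on PATH, then build a `docker run` command for a NIM container. The image name, the `/opt/nim/.cache` mount point, the `NGC_API_KEY` variable and container port 8000 follow NVIDIA's published LLM examples; treat them as assumptions and confirm them against the model's page in the API catalog.

```python
import shutil


def check_prerequisites():
    """Report which command-line tools from the setup list are on PATH."""
    return {tool: shutil.which(tool) is not None for tool in ("nvidia-smi", "docker")}


def nim_docker_command(image, cache_dir, port=8000):
    """Build a `docker run` argument list for a NIM container.

    The cache mount target, NGC_API_KEY variable and container port 8000 are
    assumptions based on NVIDIA's LLM examples; verify them for your model.
    """
    return [
        "docker", "run", "--rm",
        "--gpus", "all",                       # needs the NVIDIA Container Toolkit
        "-e", "NGC_API_KEY",                   # pass the key through from the host env
        "-v", f"{cache_dir}:/opt/nim/.cache",  # reuse downloaded model files
        "-p", f"{port}:8000",                  # expose the API on the host port
        image,
    ]
```

Launching it would then be `subprocess.run(nim_docker_command(image, cache_dir), check=True)` with `NGC_API_KEY` set in your shell; `--gpus all` only works once the NVIDIA Container Toolkit is installed.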

Enterprise-Grade Features

NVIDIA NIM goes beyond basic deployment, offering a range of features tailored for enterprise use:

- Identity Metrics: NIM provides robust identity tracking and management capabilities, allowing you to monitor and control access to your AI models. This ensures that only authorized users can interact with your deployed models, maintaining security and compliance.

- Health Check Data: NIM enables you to monitor the health and performance of your deployed models in real time. With detailed metrics and logs, you can proactively identify and address issues, ensuring optimal performance and reliability.

- Enterprise Management Tools: NIM seamlessly integrates with existing enterprise management tools, allowing you to manage your AI deployments within your established workflows. From monitoring to scaling, NIM provides the necessary hooks to align with your organization’s practices.

- Kubernetes Support: For large-scale deployments, NIM offers Kubernetes support, allowing you to leverage the power of containerization and orchestration. With Kubernetes, you can easily scale your AI models, handle high-traffic loads, and ensure high availability.
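A common health-check pattern is to poll a readiness endpoint before sending traffic, since a large model can take minutes to load. This sketch assumes the `/v1/health/ready` path used by NIM LLM containers (verify it for your version). The polling loop takes the probe, clock and sleep function as parameters, so it can be tested without a server. A Kubernetes readiness probe would typically point at the same path.

```python
import time
import urllib.request


def is_ready(base_url="http://localhost:8000"):
    """Return True if the (assumed) readiness endpoint answers with HTTP 200."""
    try:
        with urllib.request.urlopen(f"{base_url}/v1/health/ready", timeout=5) as resp:
            return resp.status == 200
    except OSError:
        # URLError, HTTPError (e.g. 503 while loading) and timeouts are all OSError.
        return False


def wait_until_ready(probe, timeout_s=600.0, interval_s=5.0,
                     clock=time.monotonic, sleep=time.sleep):
    """Call `probe()` until it returns True or `timeout_s` elapses."""
    deadline = clock() + timeout_s
    while True:
        if probe():
            return True
        if clock() >= deadline:
            return False
        sleep(interval_s)
```

In a deployment script, `wait_until_ready(is_ready)` blocks until the container reports ready or ten minutes pass.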

Unleashing AI

The applications of NVIDIA NIM are vast and diverse. Whether you’re building generative AI applications to create captivating content and artwork or deploying AI models in interactive environments such as games and simulations, NIM provides the tools and capabilities to bring your vision to life.

To get started with NVIDIA NIM, follow the detailed setup instructions provided in the documentation. With step-by-step guidance, you’ll be able to install and configure the necessary components quickly. Once your environment is ready, you can deploy your first AI model using the example scripts and commands provided, experiencing the power of accelerated AI firsthand.

As NVIDIA NIM continues to evolve, exciting developments are on the horizon. The team is actively exploring options for local deployment, bringing the capabilities of NIM closer to your development environment. Additionally, regular updates and new model additions ensure that you always have access to the latest and greatest in AI technology.

By harnessing the power of NVIDIA NIM, you can unlock the true potential of accelerated AI, pushing the boundaries of what’s possible in your projects. With its simplicity, speed, and flexibility, NIM empowers you to deploy AI models with confidence, transforming the way you innovate and create. Embrace the future of AI with NVIDIA NIM and experience the transformative impact it can have on your endeavors.

Video Credit: Source

If you buy something through one of these links, Geeky Gadgets may earn an affiliate commission. Learn about our Disclosure Policy.